What is AlphaGo?

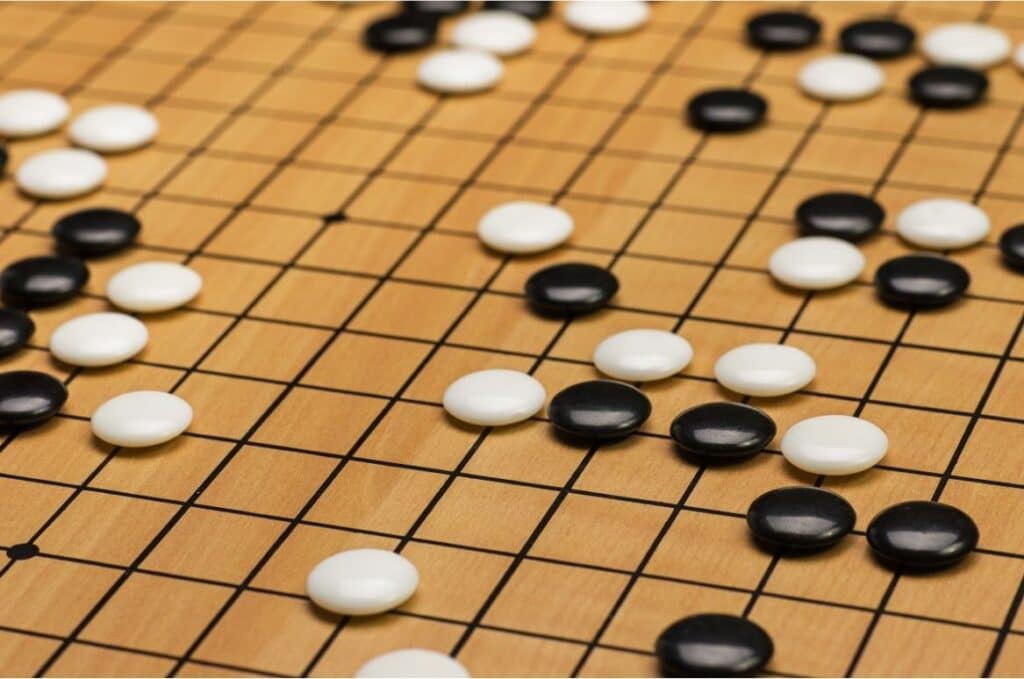

AlphaGo is a computer programme that plays the board game Go at a professional level. To do so, it combines a search algorithm with neural networks trained by machine learning. It was developed in 2015 by Google DeepMind with the aim of building an artificial intelligence that masters the game of Go.

AlphaGo forms the basis for AlphaGo Zero, which in turn serves as the basis for AlphaZero.

The significance of AlphaGo

The main challenge in developing AlphaGo was the complexity of the game itself. As early as 1997, the IBM programme Deep Blue succeeded in beating the then world chess champion Garry Kasparov under tournament conditions. The next goal was an AI that could master Go. But while chess offers on average about 35 possible moves in a given position, Go offers about 250. In total, there are around 10 to the power of 170 possible positions on the 19×19 board, far too many for the alpha-beta search used until then. Go programmes of the 1990s therefore only reached the level of amateur players.
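To get a feel for these numbers, the following sketch estimates how many move sequences a naive full search would have to consider. The branching factors 35 and 250 come from the text above. The typical game lengths of 80 moves for chess and 150 for Go are assumptions added for illustration, and the function name is hypothetical. Note that this counts move sequences, which is a different quantity from the number of board positions.

```python
import math


def log10_tree_size(branching: int, depth: int) -> float:
    """Return log10(branching ** depth), a rough size of the full game tree."""
    return depth * math.log10(branching)


# Branching factors from the text; game lengths are illustrative assumptions.
chess_exponent = log10_tree_size(branching=35, depth=80)
go_exponent = log10_tree_size(branching=250, depth=150)

print(f"chess: roughly 10^{chess_exponent:.0f} move sequences")
print(f"go:    roughly 10^{go_exponent:.0f} move sequences")
```

Working with logarithms avoids building astronomically large integers and makes the gap between the two games easy to read off as a difference in exponents.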

From 2006 onwards, Go programmes such as Zen and Crazy Stone used the Monte Carlo algorithm (a randomised algorithm that reaches a decision by evaluating many random playouts) and were thus able to beat very good amateurs. DeepMind went one step further and additionally used neural networks. In 2015, AlphaGo beat the European champion Fan Hui 5 to 0, making it the first AI to beat a human professional player at Go.
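The Monte Carlo idea can be illustrated on a much smaller game than Go. The sketch below is not how Zen, Crazy Stone or AlphaGo are implemented. It is a flat Monte Carlo evaluation, a simpler relative of the tree search those programmes use, applied to a toy version of Nim that is assumed here purely for illustration: players alternately take 1 to 3 stones, and whoever takes the last stone wins. All function names are hypothetical.

```python
import random
from typing import List


def legal_moves(stones: int) -> List[int]:
    """A player may take 1, 2 or 3 stones, but not more than remain."""
    return [n for n in (1, 2, 3) if n <= stones]


def random_playout(stones: int, player_to_move: int, rng: random.Random) -> int:
    """Play uniformly random moves until the pile is empty; return the winner."""
    while True:
        stones -= rng.choice(legal_moves(stones))
        if stones == 0:
            return player_to_move  # this player took the last stone
        player_to_move = 1 - player_to_move


def monte_carlo_move(stones: int, playouts: int = 2000, seed: int = 0) -> int:
    """Pick the move for player 0 with the best win rate over random playouts."""
    if stones < 1:
        raise ValueError("no stones left to take")
    rng = random.Random(seed)
    best_move, best_rate = 0, -1.0
    for move in legal_moves(stones):
        remaining = stones - move
        if remaining == 0:
            wins = playouts  # taking the last stone wins immediately
        else:
            # After our move it is player 1's turn; count playouts won by player 0.
            wins = sum(
                random_playout(remaining, 1, rng) == 0 for _ in range(playouts)
            )
        rate = wins / playouts
        if rate > best_rate:
            best_move, best_rate = move, rate
    return best_move


print(monte_carlo_move(5))
```

Each candidate move is judged only by the outcomes of random games played after it, which is the sense in which the decision rests on random intermediate results.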

How does the algorithm work?

The decisive difference between AlphaGo and all Go programmes developed up to that point is the use of the Monte Carlo algorithm in combination with two kinds of neural networks.

The policy network proposes the most promising next moves. The value network, meanwhile, estimates who will win the game from a given position, which allows the candidate moves to be evaluated. As a result, the algorithm only has to consider and simulate the most probable moves in order to come to a decision.
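The division of labour between the two networks can be sketched in a few lines. This is a deliberate simplification under stated assumptions: in AlphaGo the networks guide a tree search, whereas here the policy output is a plain dictionary of move probabilities and the value estimate is a lookup of made-up numbers. The names `top_k_moves` and `choose_move` are hypothetical.

```python
from typing import Callable, Dict, List


def top_k_moves(policy: Dict[str, float], k: int) -> List[str]:
    """Keep only the k moves the policy considers most probable."""
    return sorted(policy, key=lambda move: policy[move], reverse=True)[:k]


def choose_move(
    policy: Dict[str, float],
    value_after: Callable[[str], float],
    k: int = 3,
) -> str:
    """Among the top-k policy moves, pick the one with the best value estimate."""
    candidates = top_k_moves(policy, k)
    return max(candidates, key=value_after)


# Made-up numbers standing in for network outputs.
policy_output = {"a": 0.50, "b": 0.30, "c": 0.15, "d": 0.05}
win_estimate = {"a": 0.40, "b": 0.60, "c": 0.55, "d": 0.90}

print(choose_move(policy_output, lambda move: win_estimate[move], k=3))
```

Move `d` has the highest win estimate but is never evaluated, because the policy ranks it too low. That is the trade-off of pruning: far fewer positions to examine, at the risk of overlooking a move the policy underrates.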

AlphaGo's success rests on the application of machine learning, in particular supervised learning and reinforcement learning. The programme was first trained on a database of numerous amateur games to gain an understanding of human play. It then played a large number of games against itself to learn from its own mistakes and further increase its playing strength.

AlphaGo vs Lee Sedol

From 9 to 15 March 2016, AlphaGo competed against Lee Sedol, one of the best Go players in the world. He had won 18 international titles and held the highest possible rank of 9 dan. At the time of the match, his Elo rating was 3533, which placed him 4th in the world rankings.

Go experts and Lee Sedol himself agreed that AlphaGo could not win against him. But after the artificial intelligence won the first game, doubts arose. After the tournament, Lee Sedol said that he was surprised at how creatively AlphaGo played its moves and that he had felt under great pressure from the AI. In the end, AlphaGo won 4 of the 5 games. DeepMind thus demonstrated what an artificial intelligence is capable of.

A documentary about the significance of AlphaGo's victory over Lee Sedol was released in 2017. In the film AlphaGo, director Greg Kohs covers the history of the programme's development by the DeepMind team and, of course, the match against Lee Sedol. He also explores the question of what artificial intelligence can teach us about humanity.

AlphaGo Zero

AlphaGo Zero is the further development of AlphaGo. While AlphaGo first learns Go from human games, Zero skips this step. It knows only the rules of the game and learns exclusively by playing against itself with the help of reinforcement learning.

In addition, software and hardware have been changed somewhat to make AlphaGo Zero more efficient. For example, the two neural networks have been combined into one and the algorithm has also been revised.
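What combining the two networks means structurally can be shown with a toy model: a shared trunk computes features once, and two small heads turn them into move probabilities and a position value. The sketch below is an illustration only. The real network is a deep convolutional network trained on self-play, while this one has a single hidden layer with random, untrained weights, and the class name, layer sizes and input encoding are all assumptions.

```python
import math
import random
from typing import List, Tuple

Matrix = List[List[float]]


def matvec(weights: Matrix, x: List[float]) -> List[float]:
    """Multiply a weight matrix by a vector."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


def softmax(z: List[float]) -> List[float]:
    """Turn raw scores into probabilities that sum to 1."""
    m = max(z)
    exps = [math.exp(v - m) for v in z]
    total = sum(exps)
    return [e / total for e in exps]


class TwoHeadedNet:
    """Toy network: one shared trunk, a policy head and a value head."""

    def __init__(self, n_in: int, n_hidden: int, n_moves: int, seed: int = 0):
        rng = random.Random(seed)

        def init(rows: int, cols: int) -> Matrix:
            return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

        self.trunk = init(n_hidden, n_in)           # shared by both heads
        self.policy_head = init(n_moves, n_hidden)  # one score per move
        self.value_head = init(1, n_hidden)         # single scalar output

    def forward(self, x: List[float]) -> Tuple[List[float], float]:
        hidden = [math.tanh(v) for v in matvec(self.trunk, x)]
        policy = softmax(matvec(self.policy_head, hidden))
        value = math.tanh(matvec(self.value_head, hidden)[0])  # in [-1, 1]
        return policy, value


net = TwoHeadedNet(n_in=4, n_hidden=8, n_moves=3)
move_probs, position_value = net.forward([1.0, 0.0, -1.0, 0.5])
print(move_probs, position_value)
```

Because both heads read the same hidden features, one forward pass yields the move probabilities and the value estimate together, instead of evaluating two separate networks.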

After just three days of training, Zero was stronger than the version of AlphaGo that had defeated Lee Sedol. And after 40 days of training, Zero also beat AlphaGo Master, until then the latest and strongest version of AlphaGo.

AlphaGo Zero later became the basis for AlphaZero, which mastered the three games of Go, chess and shogi solely by playing against itself.