Why, AI? The Basics of Explainable AI (XAI)

- Published:

- Author: Dr. Luca Bruder, Lukas Lux

- Category: Deep Dive


The Magic of the Machine

People can easily explain most of their actions: we cross the street because the light is green. If we want an explanation for someone else's actions, we usually just ask.

Our own introspective experiences with our bodies, as well as our observation of and interaction with the environment, allow us to understand and communicate our inner motivations. The reasons behind an AI (artificial intelligence) decision, on the other hand, are much more difficult to comprehend. An AI model often arrives at a conclusion for completely different reasons than a human would.

The information about why an algorithm makes a particular decision is often contained in an incredibly complex binary yes-no game that is initially opaque to humans. Understanding this game in a way that allows us to comprehend and describe it is an important challenge for users and developers of AI models, because AI applications are becoming more and more a part of our everyday lives.

Especially for users without in-depth background knowledge of how AI models work, the decisions made by these models can seem almost magical. This is possibly one of the reasons why many people not only distrust AI, but also view it with a certain degree of aversion. Improving the interpretability of AI models must therefore be an essential part of the future of these technologies.

Reliable systems must be explainable

Particularly in safety-critical applications for AI, it is extremely important for legal and ethical reasons alone to be able to understand algorithmic decisions. Just think of autonomous driving, credit scoring, or even medical applications: Which factors lead to which results, and is that what we want? For example, we don't want people to be denied credit because of their background, or someone to be run over by a self-driving car for the wrong reasons. Even for less critical decisions, the interpretability of the model plays a role, as already described: whether it's to build trust in the model's predictions or simply to find out the reasons for the value of a forecast.

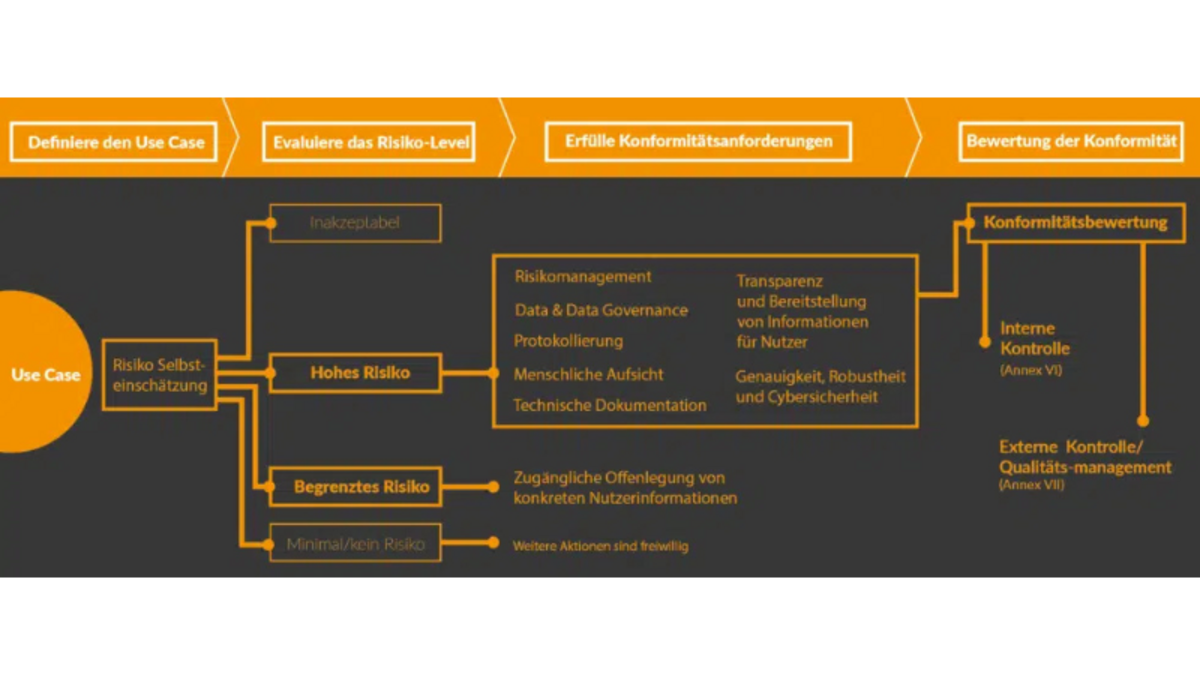

Therefore, the use of AI-based systems is subject to the basic rules of any other software system in order to avoid misinformation and discrimination and to ensure the protection of personal data. In addition, the European Union is discussing a draft law for the specific assessment and regulation of AI systems: the AI Act. The draft, which is expected to come into force in 2025, provides for AI systems to be classified into four categories: no risk, low risk, high risk, and unacceptable risk. In many industries, companies that want to use AI cannot avoid high-risk systems: all applications that affect public safety or human health are considered high-risk, and the definition is broad.

Managing and evaluating AI use cases within your own company is time-consuming, especially when AI is used in production. Clear rules and testing procedures are lacking. A use case management tool such as Casebase can help minimize risks such as the following:

- Unmonitored AI applications in operation

- Undocumented AI use cases

- High-risk or unacceptable risk systems not on the radar

- Penalties from regulatory authorities

- Damage to image

Requirements such as detailed documentation, appropriate risk assessment and mitigation, and supervisory measures are essential for the sustainable explainability of models. This is the only way to meet the high demands placed on AI use cases.

The Black Box

Deep learning in particular is often referred to as a black box. A black box is a system in which we know the inputs and outputs, but have no knowledge of the process within the system. This can be illustrated quite simply: A child trying to open a door must press down on the door handle (input) to cause the latch (output) to move. However, the child cannot see how the locking mechanism inside the door works. The locking mechanism is a black box. Only by looking inside can we shed light on what is going on.

Why Explainable AI?

So, we should actually be used to dealing with systems that are not transparent to outsiders in many situations in life. We are constantly surrounded by seemingly endless complexity. We pick an apple from a tree even though we don't understand exactly which molecules interact with which during its growth. It seems as if a black box in itself is not a problem. But it's not that simple, because throughout our evolutionary history, we humans have learned through trial and error what we can trust and what we can't. If an apple meets certain visual criteria and smells like an apple, we trust the product of the apple tree. But what about AI?

We cannot always draw on a thousand years of experience. Often, we also cannot intervene in the selection of inputs to find out how they influence the output (less water → smaller apple). With AI systems, to which we often provide input only unconsciously, things get trickier: users of AI systems that are already running can only try to explain how the system makes its decisions based on a limited number of its past decisions. We can only test or estimate which parameters and factors play a role in this process. This is where Explainable AI (XAI) comes into play.

XAI describes a growing number of methods and techniques for making complex AI systems explainable. Explainable AI is not a new topic: efforts in the field of explainability have been underway since the 1980s. However, the steadily growing number of applications and the increasing establishment of ML methods have significantly increased the importance of this area of research. Depending on the algorithms used, these efforts are more or less promising. For example, a model based on linear regression is much easier to explain than an artificial neural network. Nevertheless, the XAI toolbox also includes methods for making such complex models more explainable.
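To illustrate why a linear model is comparatively easy to explain, here is a minimal sketch in Python. It assumes a toy credit-scoring model; the feature names, weights, and intercept are invented for illustration and do not come from any real system. Because the prediction is just a sum, each feature's share of the result can be read off directly.

```python
def explain_linear_prediction(weights, intercept, features):
    """Split a linear model's prediction into one additive term per feature."""
    contributions = {name: weights[name] * value for name, value in features.items()}
    prediction = intercept + sum(contributions.values())
    return prediction, contributions


# Hypothetical weights of a toy scoring model (assumption, for illustration only).
weights = {"income_k_eur": 2.0, "open_loans": -15.0, "years_employed": 3.0}
intercept = 500.0

# Hypothetical applicant.
applicant = {"income_k_eur": 40.0, "open_loans": 2.0, "years_employed": 5.0}

prediction, contributions = explain_linear_prediction(weights, intercept, applicant)

print(f"score = {prediction}")
# List the features by the size of their effect on this one prediction.
for name, value in sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True):
    print(f"{name:>15}: {value:+.1f}")
```

In this toy case the score of 565 breaks down into the intercept (500) plus +80 for income, -30 for open loans, and +15 for years employed. A neural network offers no such direct decomposition, which is why additional XAI methods are needed there.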

Advances in hardware have also greatly facilitated access to explanatory models. Today, it is no longer as time-consuming as it was twenty years ago to test thousands of explanations or carry out numerous interventions in order to understand the behavior of a model.
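One common way of "carrying out numerous interventions" is permutation importance: shuffle one input at a time and measure how much the model's error grows. The following is a minimal sketch in plain Python, not a production implementation. The `black_box` function, its three inputs (`water`, `sunlight`, `noise`), and the synthetic data are all assumptions made up for this example, loosely following the apple analogy.

```python
import random


def black_box(row):
    """Stand-in for an opaque model: we only call it, we never look inside."""
    water, sunlight, noise = row
    return 3.0 * water + 1.0 * sunlight + 0.0 * noise


def mean_squared_error(model, rows, targets):
    return sum((model(r) - t) ** 2 for r, t in zip(rows, targets)) / len(rows)


def permutation_importance(model, rows, targets, n_repeats=20, seed=0):
    """Average increase in error when one input column is shuffled."""
    rng = random.Random(seed)
    baseline = mean_squared_error(model, rows, targets)
    importances = []
    for col in range(len(rows[0])):
        increases = []
        for _ in range(n_repeats):
            # Intervention: break the link between this input and the output.
            shuffled = [r[col] for r in rows]
            rng.shuffle(shuffled)
            perturbed = [r[:col] + (v,) + r[col + 1:] for r, v in zip(rows, shuffled)]
            increases.append(mean_squared_error(model, perturbed, targets) - baseline)
        importances.append(sum(increases) / n_repeats)
    return importances


# Synthetic data (assumption): three inputs drawn uniformly from [0, 1).
data_rng = random.Random(42)
rows = [(data_rng.random(), data_rng.random(), data_rng.random()) for _ in range(200)]
targets = [black_box(r) for r in rows]

importances = permutation_importance(black_box, rows, targets)
for name, score in zip(["water", "sunlight", "noise"], importances):
    print(f"{name:>8}: {score:.3f}")
```

Shuffling `water` hurts the predictions most, shuffling `sunlight` hurts less, and shuffling `noise` changes nothing, which matches how the toy model was built. The 4,000 perturbed data sets evaluated here run in well under a second on current hardware.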

Examples of XAI in practice

In theory, XAI methods and the right know-how can be used to interpret even complex black-box models, at least in part. But what does this look like in reality? In many projects or use cases, interpretability is an important pillar of overall success. Security aspects, lack of trust in model results, possible future regulations, and ethical concerns make interpretable ML models a necessity.

We at [at] are not the only ones who have recognized the importance of these issues: European lawmakers also plan to make interpretability a mandatory feature of AI applications in safety-critical and data protection-relevant fields. In this context, we at [at] are working on a series of projects that use new methods to make our AI applications and those of our customers interpretable. One example is the AI Knowledge project, which is funded by the German Federal Ministry for Economic Affairs and Climate Protection with €16 million. Here, we are working with our partners from industry and research to make AI models for autonomous driving explainable. Closely related to this topic is reinforcement learning (RL), which has been very successful in solving many problems, but unfortunately often remains a black box. For this reason, our employees are conducting research in another project to make RL algorithms interpretable and secure.

In addition, our data scientists are working on a toolbox to make the SHAP (SHapley Additive exPlanations) method usable for very complex data sets. All in all, at [at], we can offer a well-founded catalog of methods for the interpretability of AI models, derived from current research. Whether it's the development of a new and interpretable model or the analysis of your existing models, please feel free to contact us if you are interested.
