Artificial neural networks, or ANNs for short, are, put simply, units for processing information. Their operating principle is so effective that they have become a foundation for the development of artificial intelligence or, more precisely, of machine learning.

Their special feature is that they do not work in a predefined, always identical way, as a pocket calculator does, but are capable of learning. This lets them base their calculations on inputs that are ambiguous when taken on their own and that yield an unambiguous result only in combination with many other factors, for example when symptoms are evaluated to diagnose a disease.

Typically, artificial neural networks therefore learn certain rules from training data during a training phase, which they can later apply and adapt automatically. This text explains in principle what artificial neural networks are, how they work and for which specific purposes they can be used.

Biological basics and functioning of neural networks

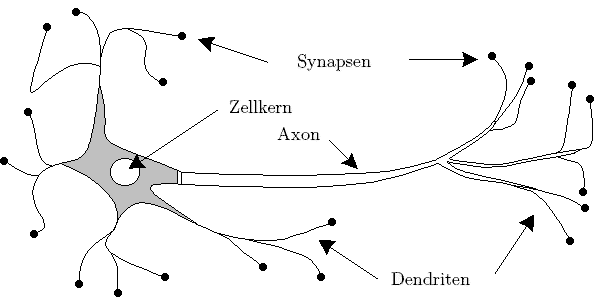

Artificial neural networks are one aspect of artificial intelligence or, more precisely, a subcategory of machine learning. Their name rests on an analogy: their operating principle is borrowed from nature. They are modelled on nerve cells (neurons), which also form the basis of signal processing in, for example, the human brain or spinal cord. Three components of a nerve cell matter here: the synapses, the axon and the cell body.

The illustration shows a simplified representation of a human nerve cell.

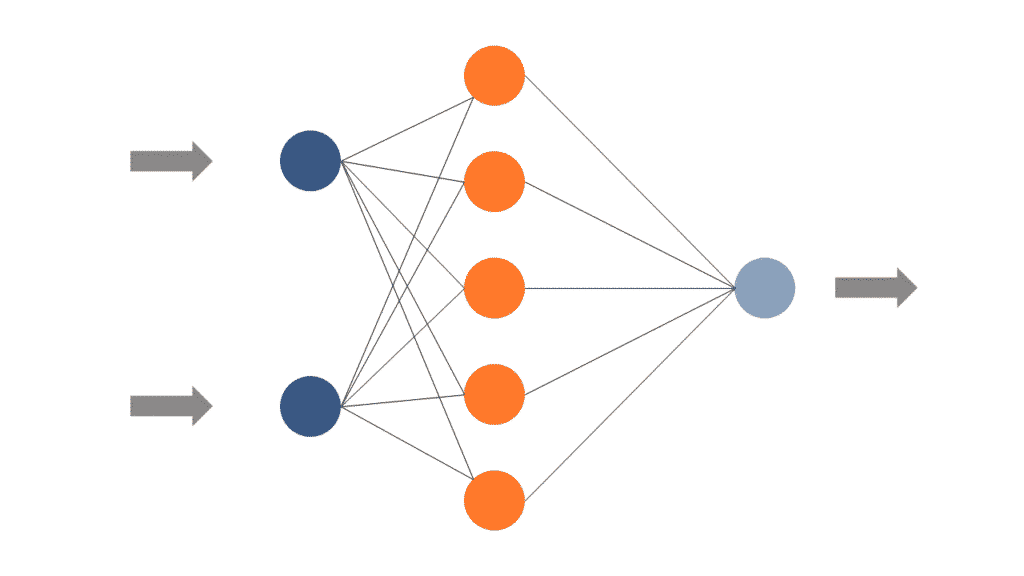

Natural and artificial neurons work in a very similar way. The synapses receive the data. When they register activity, they pass this signal on to the cell body. There, the signal strength decides whether the signal is transmitted onward via the axon. In artificial neural networks, too, the individual signals are weighted and summed in an analogous way.

The figure above is a schematic illustration of how the nodes in an ANN work. Source: (hs-bremen.de)
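The weighting and summing performed by a single node can be sketched in a few lines of Python. This is only a minimal illustration, not the scheme from the figure: the function name `neuron_output`, the example weights and the threshold of 0.5 are all assumptions made for the example.

```python
def neuron_output(inputs, weights, threshold):
    """Hypothetical single node: weighted sum followed by a step decision."""
    # Each incoming signal is scaled by the weight of its connection.
    weighted_sum = sum(x * w for x, w in zip(inputs, weights))
    # The node passes the signal on (returns 1) only if the summed
    # signal strength reaches the threshold.
    return 1 if weighted_sum >= threshold else 0


# Three incoming signals; the values and weights are made up for illustration.
signals = [1.0, 0.0, 1.0]
weights = [0.4, 0.9, 0.3]
fired = neuron_output(signals, weights, threshold=0.5)
print(fired)
```

Here the first and third signals are active and their weights add up to more than the threshold, so the node passes the signal on. Real networks usually replace the hard threshold with a smooth activation function.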

The complexity of the brain and the hidden layers

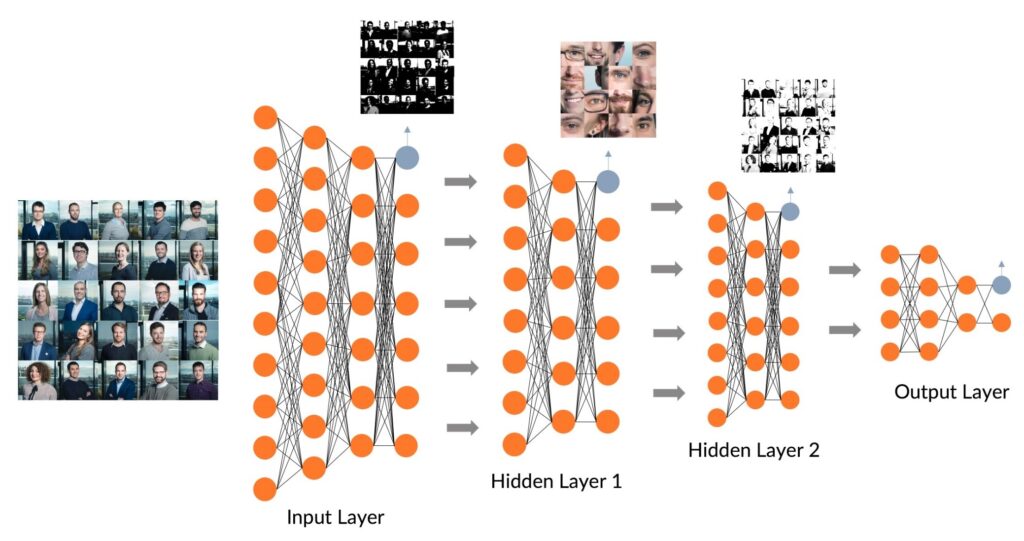

Just as neurons in the human brain do not work individually but are connected in highly complex networks, artificial neurons also work together in a network. More precisely, this network consists of a smaller or larger number of layers:

In principle, a distinction is made between the input layer, the hidden layers and the output layer. How exactly a signal travels from the input layer to the output layer depends heavily on the learned rules, that is, the connection weights, which are adjusted during the training phase. Just like the human brain, these networks of artificial neurons can thus learn to interpret signals differently.
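The path of a signal from the input layer through a hidden layer to the output layer can be sketched as follows. This is a minimal illustration under stated assumptions: the helper names (`sigmoid`, `layer_forward`, `network_forward`), the layer sizes and all weight values are invented for the example, and the sigmoid activation is one common choice rather than something the text prescribes.

```python
import math


def sigmoid(z):
    # Squashes any real number into the range (0, 1).
    return 1.0 / (1.0 + math.exp(-z))


def layer_forward(inputs, weight_rows, biases):
    # One layer: every node computes a weighted sum of all inputs,
    # adds its bias and applies the activation function.
    return [
        sigmoid(sum(x * w for x, w in zip(inputs, row)) + b)
        for row, b in zip(weight_rows, biases)
    ]


def network_forward(inputs, layers):
    # The output of each layer becomes the input of the next one.
    activations = inputs
    for weight_rows, biases in layers:
        activations = layer_forward(activations, weight_rows, biases)
    return activations


# Hypothetical network: 2 input values -> 3 hidden nodes -> 1 output node.
hidden_layer = ([[0.5, -0.2], [0.1, 0.8], [-0.7, 0.3]], [0.0, 0.1, -0.1])
output_layer = ([[0.6, -0.4, 0.9]], [0.05])
result = network_forward([1.0, 0.5], [hidden_layer, output_layer])
print(result)
```

Changing any weight changes how strongly a signal is passed along a connection, which is why adjusting the weights during training changes how the network interprets its input.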

The difficulty with speech recognition, for example, is that identical signals can have different meanings. The simple German word "zu" is a case in point: in some sentences it means "closed" ("Der Laden ist zu", "The shop is closed"), and in others it acts as a preposition with a spatial meaning ("Jemand kommt zu uns", "Someone is coming to us").

To distinguish such cases, neural networks need feedback from outside ("feedback model"). During the training phase, humans tell the network whether it has solved a case correctly or incorrectly. However, there are also self-learning neural networks ("recurrent model") that can draw conclusions independently on the basis of previous analyses.

For example, to learn a winning chess strategy, an algorithm plays against itself again and again. It can then compare each game with the previous ones, and each time it automatically receives feedback on how well the new strategy worked.
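Training with outside feedback, where the network is told for each case whether its answer was right, can be illustrated with the classic perceptron learning rule. This is a swapped-in textbook technique chosen for brevity, not necessarily the method the text has in mind; the names `predict` and `train_with_feedback`, the learning rate and the tiny data set (the logical AND of two inputs) are assumptions for the example.

```python
def predict(weights, bias, inputs):
    # Single node: weighted sum plus bias, followed by a step decision.
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if total > 0 else 0


def train_with_feedback(examples, epochs=20, learning_rate=0.1):
    # Start without any knowledge: all weights and the bias are zero.
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for inputs, correct in examples:
            # Feedback: +1 or -1 if the answer was wrong, 0 if it was right.
            error = correct - predict(weights, bias, inputs)
            # Nudge each weight in the direction that reduces the error.
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias


# Labelled cases: the output should be 1 only if both inputs are 1.
examples = [([0, 0], 0), ([0, 1], 0), ([1, 0], 0), ([1, 1], 1)]
weights, bias = train_with_feedback(examples)
print([predict(weights, bias, inputs) for inputs, _ in examples])
```

After a handful of passes over the four cases the node classifies all of them correctly, and further passes no longer change the weights because the feedback is always zero.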

The "depth" of ANNs

The hidden layers are the crucial part of an ANN. In contrast to the simplified representation in Figure 3, there are usually not just one or two hidden layers between the input and output layers, but many. This is referred to as the "depth" of an artificial neural network, and it is the origin of the terms "deep neural network" and "deep learning". Figure 4 shows a schematic representation of a more complex ANN with greater depth, using image recognition as an example.

The more layers and nodes a network has, the more complex the tasks it can solve. But ANNs do not show their strengths only where complexity is concerned. Another special property, one that machine-learning algorithms share more generally even when they do not use ANNs, is their ability to learn.
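A small, well-known example of why additional layers matter is the XOR function ("exactly one of two inputs is active"). A single threshold node cannot compute it, but a network with one hidden layer can. The sketch below uses hand-picked weights rather than trained ones, and the names `step` and `xor_network` are hypothetical.

```python
def step(z):
    # Threshold activation: the node fires only for a positive sum.
    return 1 if z > 0 else 0


def xor_network(a, b):
    # Hidden layer: one node detects "a OR b", the other "a AND b".
    h_or = step(a + b - 0.5)
    h_and = step(a + b - 1.5)
    # Output node: fires when the OR node is active but the AND node is not.
    return step(h_or - h_and - 0.5)


# Evaluate the network on all four input combinations.
table = {(a, b): xor_network(a, b) for a in (0, 1) for b in (0, 1)}
print(table)
```

The hidden nodes turn the raw inputs into intermediate features that the output node can then separate with a simple threshold. Deeper networks repeat this idea across many layers.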

What is the significance of neural networks for the economy?

The greatest strength of neural networks is that they can be trained for a specific task. Once trained, they can often handle this task significantly better than humans. However, this does not usually mean that they replace humans. Rather, artificial neural networks can perform tasks on a scale that is simply impossible for humans.

If, for example, the task is to index a database with 34 million individual images according to their content, a human being would need months or even years for this task. Once an artificial neural network has been properly trained, it can complete tasks like this in just a few hours.

Use Cases

Because neural networks can answer complex questions, numerous use cases can be derived. In medical diagnostics, artificial neural networks can be trained to interpret symptoms or to evaluate X-ray images, ultrasound scans or MRI images. While doctors repeatedly run up against limits in diagnostic imaging, algorithms keep getting better at the same task.

Neural networks are also well suited to classification tasks in various areas, be it credit rating checks at banks or quality control, for example in the food industry, where fruit and vegetables have to be sorted according to certain criteria. In addition, neural networks are particularly suitable for:

- Speech generation and speech recognition: telephone banking, foreign language translation, automatic announcement texts

- Forecasts and trend analysis: weather, share prices

- Self-learning control systems: robotics, autonomous vehicles or production machines

- Pattern and character recognition: image content, writing, faces

- Game development, entertainment and culture: chess, skat, music composition, image creation as in the app Prisma

- Optimisation tasks: optimising travel routes while taking into account variable factors such as traffic jam reports, weather data and traffic data, or optimisation in the area of energy supply

- Simulation: Training of robotic arms in virtual reality for subsequent use in the real world.

Artificial neural networks as a basis for machine learning

Deep learning is one of the most successful forms of artificial neural networks. The method is called "deep" because the numerous hidden layers give the neural network a certain depth. There are numerous other forms or classes of learning systems, such as learning matrices, oscillating neural networks, Boltzmann machines and recurrent neural networks.

The ability to learn accounts for a large part of the current success of certain AI applications. Artificial neural networks make it possible to learn from "experience", that is, from data, and, provided there is enough training data, to derive a general rule from a large number of individual cases and apply it to future cases. They thus provide not only the basis for machine learning but also the reason for groundbreaking achievements in the field of artificial intelligence.
