
Introduction

Artificial intelligence (AI) is revolutionising many facets of our daily lives and is a key driver of current developments in business and industry. Today, AI-powered algorithms solve tedious problems efficiently and intelligently, often surpassing human performance in a variety of areas. Reinforcement Learning (RL) is a central pillar of modern AI, with applications ranging from RL agents that beat human players in complex games such as Go or chess to state-of-the-art language models such as ChatGPT. It is therefore not surprising that RL is increasingly attracting interest for industrial applications. The aim of this article is to provide an overview of the application of RL in industry and to discuss the vast potential of RL as an optimisation tool for various business problems.

There are several dominant machine learning paradigms used in industry. Supervised learning makes specific predictions based on pre-defined labels. A classic example is object recognition, where the task is to classify whether a given image contains objects such as pedestrians or traffic lights. Training is performed by providing labelled examples (images) and finding patterns that can be applied to classify unseen examples.

Unsupervised learning tries to find structure in data without any labelled examples. For example, we might want to find out which images show similar situations and group them into clusters.

While supervised and unsupervised learning focus on understanding a situation, many problems (e.g., self-driving cars) are not just about understanding, but also about deciding how to act in a situation (accelerate, brake, steer, etc.). Such optimisation and control problems, where appropriate actions are mapped onto specific situations, are the hallmark of Reinforcement Learning. For a more general introduction to the theoretical background of reinforcement learning and its key concepts, you can read our terminology blog.

For an in-depth technical introduction that builds a basic understanding of reinforcement learning (RL) through a practical example, see our dedicated blog post.

Reinforcement Learning in business: Where is RL used?

What types of business problems can be solved with reinforcement learning? As mentioned earlier, RL is a powerful optimisation tool, which means that RL is particularly suited to problems where we know the structure and the desired goal state, but do not know how best to achieve that desired state. Importantly, unlike other techniques such as supervised learning, with RL we do not need to know a "ground truth" about how to solve a problem.

Imagine a robot that needs to learn how to navigate from a specific starting point to a goal while avoiding certain obstacles. One option would be to learn from example paths that define the way from start to goal (walk three steps straight, then turn right, then straight again, ...), as in supervised learning. This would mean that the robot does not learn to find optimal sequences itself; instead, we feed it specific instructions about how to act in different locations. This may sound reasonable at first but is highly impractical in realistic cases. Often, we know our starting point and goal, but we cannot specify the exact (or optimal) path from start to goal: we need an agent that can learn to find an optimal path by itself. Further, even if we knew the correct solution, our robot would be unlikely to generalise its knowledge to other, similar situations, so even a tiny change in the environment could leave it inflexible and dysfunctional.

Generally, such supervised learning techniques provide a powerful framework for problems like computer vision but are inadequate in more flexible sequential optimisation tasks. Instead, we need our robot to explore the environment and learn from trial-and-error, based on feedback about good and bad movements. Therefore, we don't need to provide solved examples as training data, and instead provide our robot with a flexible mechanism for continuous learning: if the environment changes, this will be reflected in changing feedback that our robot receives and will cause it to re-learn the path from start to goal. This approach corresponds to reinforcement learning, which provides a powerful and general framework for solving real-world problems.
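To make the trial-and-error idea concrete, here is a minimal sketch of tabular Q-learning, one standard RL algorithm chosen here purely for illustration. The 3x4 grid layout, the reward values (+10 for reaching the goal, -1 per step) and the hyperparameters are our own assumptions. Note that the robot is never shown an example path: it only receives reward feedback from the hypothetical `step` function.

```python
import random

# Hypothetical 3x4 layout: S = start, G = goal, # = obstacle.
GRID = [
    "S..#",
    ".#..",
    "...G",
]
START = (0, 0)
ACTIONS = {"up": (-1, 0), "down": (1, 0), "left": (0, -1), "right": (0, 1)}


def step(state, action):
    """Apply one move; walls and grid edges leave the robot where it is."""
    r, c = state
    dr, dc = ACTIONS[action]
    nr, nc = r + dr, c + dc
    inside = 0 <= nr < len(GRID) and 0 <= nc < len(GRID[0])
    if not inside or GRID[nr][nc] == "#":
        nr, nc = r, c
    if GRID[nr][nc] == "G":
        return (nr, nc), 10.0, True   # good feedback: goal reached
    return (nr, nc), -1.0, False      # small cost for every extra step


def greedy(q, state):
    """Action with the highest current value estimate (ties: first listed)."""
    return max(ACTIONS, key=lambda a: q.get((state, a), 0.0))


def q_learning(episodes=1000, alpha=0.5, gamma=0.95, epsilon=0.1,
               max_steps=100, seed=0):
    """Tabular Q-learning: values come only from trial-and-error feedback."""
    rng = random.Random(seed)
    q = {}  # (state, action) -> estimated long-term reward
    for _ in range(episodes):
        state = START
        for _ in range(max_steps):
            # Explore with probability epsilon, otherwise exploit.
            if rng.random() < epsilon:
                action = rng.choice(list(ACTIONS))
            else:
                action = greedy(q, state)
            nxt, reward, done = step(state, action)
            target = reward if done else reward + gamma * q.get((nxt, greedy(q, nxt)), 0.0)
            old = q.get((state, action), 0.0)
            q[(state, action)] = old + alpha * (target - old)
            state = nxt
            if done:
                break
    return q


def greedy_path(q, max_steps=20):
    """Follow the learned policy from START and record visited cells."""
    state, path = START, [START]
    for _ in range(max_steps):
        state, _, done = step(state, greedy(q, state))
        path.append(state)
        if done:
            break
    return path
```

Calling `greedy_path(q_learning())` should return a shortest route of five moves from `S` to `G`. If you edit `GRID` (for example, by adding an obstacle) and re-run `q_learning`, the route is re-learned from the changed feedback alone, with no new example paths.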

For a compact introduction to the definition and terminology behind reinforcement learning, read our basic article on the methodology.

Reinforcement Learning Use Cases in Industry

Apart from teaching robots how to reach goals, how can reinforcement learning be used in realistic industrial use cases? There are many examples of reinforcement learning in industry, some of which we describe below.

Because reinforcement learning provides a powerful and general optimisation framework, it has great potential for solving problems with many moving and interacting parts, such as those in the energy sector. Here, RL can be used to optimise processes from both the provider and the consumer perspective. On the provider side, RL can help to adapt to expected future energy demand and to optimise demand response programmes that encourage customers to save energy. On the consumer side, RL is a state-of-the-art tool for optimising energy consumption by finding the right balance between minimising costs and providing sufficient energy for processes such as heating or lighting. It can also be used to learn how to optimally adjust wind turbines or solar panels to maximise energy production, or to find the ideal balance between minimising the cost of charging or storing energy (for example, in batteries) and providing sufficient energy.

Use Case #1: Reducing energy consumption in data centres

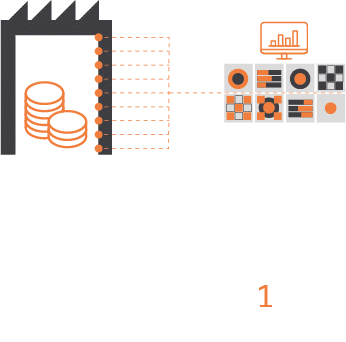

A particularly convincing example of RL in the energy sector is the reduction of energy consumption in data centres. Currently, data centres make up around 2% of global energy demand, but they might be responsible for around 8% of global energy consumption by 2030 (and around 21% if we include other parts of information and communication technology; see nutanix.com and www.nature.com). To address this issue, Google DeepMind developed an RL-based system to reduce the energy consumption of data centres. As shown in Figure 1, this system consists of three key steps. First, a cloud-based system reads out information from the data centre cooling system to create a complex state representation. Second, a deep neural network uses this state representation to predict future energy efficiency and temperature for proposed actions (i.e., changes to the cooling system), reflecting the value of different actions. Third, based on these values, the RL system chooses the action that optimises energy efficiency while satisfying safety constraints. With this system, energy demand from data centres could be reduced by 30% (Safety-first AI for autonomous data centre cooling and industrial control, deepmind.com), and the energy used for cooling could be reduced by 40% (DeepMind AI Reduces Google Data Centre Cooling Bill by 40%).

1. Every five minutes, the cloud-based system takes a snapshot of the data centre cooling system (state s) from thousands of physical sensors.
2. This information (state s) is fed into a deep neural network that predicts future energy efficiency and temperature for the proposed actions (Q-values).
3. Actions are selected (policy) that satisfy the constraints and minimise energy consumption.
4. The optimal actions are sent back to the data centre, where the local control system checks them against its own safety constraints.

Figure 1. An RL-based system to optimise energy demand in data centres, developed by Google DeepMind (Safety-first AI for autonomous data centre cooling and industrial control)
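The decision loop in Figure 1 can be sketched in a few lines. This is a toy illustration under our own assumptions, not DeepMind's implementation: `toy_model` is a hypothetical stand-in for the deep neural network, actions are assumed to be changes to a chilled-water setpoint, and all numbers are invented. The sketch shows the pattern described above: predict the outcome of each candidate action, discard actions that violate a safety constraint, pick the most energy-efficient remaining one, and let an independent local check veto large changes.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    energy_kw: float   # predicted cooling power draw
    max_temp_c: float  # predicted hottest inlet temperature


def toy_model(state, action):
    """Hypothetical stand-in for the deep neural network in Figure 1.

    `action` is a change to the chilled-water setpoint in degrees C:
    a warmer setpoint saves cooling energy but warms the server hall.
    """
    energy = 0.4 * state["it_load_kw"] - 15.0 * (state["setpoint_c"] + action - 18.0)
    temp = state["inlet_temp_c"] + 0.8 * action
    return Prediction(energy_kw=energy, max_temp_c=temp)


def choose_action(state, candidate_actions, model, temp_limit_c):
    """Policy: among actions predicted to be safe, pick the lowest energy use."""
    safe = []
    for action in candidate_actions:
        pred = model(state, action)
        if pred.max_temp_c <= temp_limit_c:  # safety constraint
            safe.append((pred.energy_kw, action))
    if not safe:
        return 0.0  # fall back to "no change" when nothing is predicted safe
    return min(safe)[1]


def local_check(action, max_change_c=1.0):
    """Independent on-site check: reject setpoint changes that are too large."""
    return abs(action) <= max_change_c
```

For example, with `state = {"setpoint_c": 18.0, "it_load_kw": 500.0, "inlet_temp_c": 25.0}`, calling `choose_action(state, [-1.0, -0.5, 0.0, 0.5, 1.0, 1.5], toy_model, 26.0)` returns `1.0`. The larger change `1.5` would save more energy, but its predicted temperature (26.2 °C) exceeds the limit, so it is never considered.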

More recently, Google DeepMind has also developed RL-based algorithms to optimise the resource utilisation of Google's data centres and to increase the efficiency of software development (Optimisation of computer systems with more general AI tools).

Use Case #2: Optimisation in the area of transport & logistics

We have seen that RL is a powerful framework for use cases in the energy sector and will play a central role in making our digital age more sustainable. RL is also critical in other domains where sequential optimisation problems are prevalent, such as transport and logistics. Companies can use RL to optimise traffic control in public transport by finding sequences of actions that minimise delays or improve the customer experience, for example by managing delays, reducing the number of train changes passengers need to make, or controlling the timing of traffic lights. In logistics, RL is used to optimise supply-chain costs, to discover new optimal delivery routes and to manage inventory in warehouses. Here, the trade-off lies between ensuring product availability and reducing storage and delivery times.

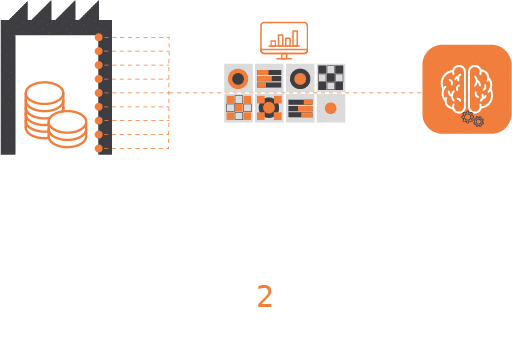

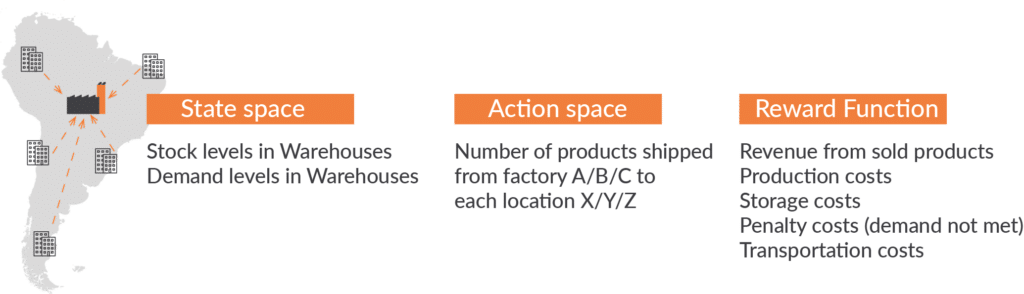

RL is a common framework for supply chain optimisation and is used by companies such as Amazon. The key idea is to use RL to find the best distribution of products to warehouses and, if required, the right amount of production of goods in factories (Optimising the supply chain network - cutting through complexity to maximise efficiency). Here, the state space consists of the stock and demand levels in the different warehouses, and actions reflect the distribution and movement of products between warehouses or from production sites to warehouses. RL is then used to find actions that optimise a reward function, which is often a balance between revenue from sold products and different types of costs, such as production, storage or transportation costs, as well as (large) penalty costs for not meeting customer demand (Reinforcement learning for supply chain optimization). Figure 2 provides an overview of this specification (adapted from Reinforcement learning for supply chain optimization). RL is highly successful in solving such supply chain optimisation tasks, which are an area of much recent research interest (A Reinforcement Learning Approach for Supply Chain Management; A review on reinforcement learning algorithms and applications in supply chain management).

Figure 2. An RL-based system for supply chain optimisation (adapted from Reinforcement learning for supply chain optimization)
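The state, action and reward described above can be written down as a single environment step. The sketch below is our own simplified illustration, not the specification used by Amazon or in the cited work: it assumes one factory, assumes that everything shipped is produced in the same period, and uses hypothetical per-unit costs. An RL agent would then learn which `shipments` to choose in each state so as to maximise the cumulative reward.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Costs:
    """Hypothetical per-unit prices and costs."""
    price: float = 10.0             # revenue per unit sold
    production: float = 4.0         # cost per unit produced at the factory
    transport: float = 1.0          # cost per unit shipped to a warehouse
    storage: float = 0.5            # cost per unit left in stock
    stockout_penalty: float = 20.0  # large penalty per unit of unmet demand


def supply_chain_step(stock, demand, shipments, costs=None):
    """One period of a single-factory, multi-warehouse supply chain.

    State:  stock and demand levels per warehouse.
    Action: units shipped from the factory to each warehouse
            (assumed to be produced in the same period).
    Returns the next stock levels and the reward for this period.
    """
    costs = costs or Costs()
    reward = -costs.production * sum(shipments)
    new_stock = []
    for s, d, x in zip(stock, demand, shipments):
        available = s + x
        sold = min(available, d)
        unmet = d - sold
        left = available - sold
        reward += costs.price * sold
        reward -= costs.transport * x + costs.storage * left
        reward -= costs.stockout_penalty * unmet
        new_stock.append(left)
    return new_stock, reward
```

With stock `[5, 0]` and demand `[8, 4]`, shipping `[3, 6]` yields a reward of `74.0`, whereas shipping only `[0, 2]` yields `-40.0`: the savings on production and transport are far outweighed by the stock-out penalty, which is exactly the trade-off the reward function is meant to encode.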

Use Case #3: Finance and Banking

Beyond energy, transport and logistics, reinforcement learning is also widely used in finance, where it provides a powerful framework for stock trading and asset management. Predicting future sales or stock prices is a key element of forecasting analyses in finance. However, forecasting models, such as those based on supervised learning, do not tell us how to act in order to boost sales or maximise a profit margin. RL models, on the other hand, can learn how to optimise behaviour to achieve a certain objective in fluctuating markets. A prime example of the use of reinforcement learning is automated stock trading (Blog JP Morgan). Importantly, RL algorithms are not limited to maximising profit while ignoring risk. What exactly is optimised depends on the definition of the objective function, which can combine the aim of maximising returns with controlling risk. This approach lies at the heart of RL applications in portfolio optimisation and risk management. Further, RL is often applied in credit scoring models, which can learn to adjust credit limits as risks change dynamically.
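One simple way to combine returns and risk in a single objective is to penalise the variance of returns, in the spirit of mean-variance portfolio theory. The sketch below is an assumption made for illustration and is not the reward used in the JP Morgan work or in any particular product; the `risk_aversion` parameter is hypothetical and would have to be tuned for a real application.

```python
import statistics


def risk_adjusted_reward(period_returns, risk_aversion=2.0):
    """Mean return minus a variance penalty (a mean-variance style objective).

    risk_aversion = 0 gives a pure profit-maximising reward; larger values
    make an agent trained on this reward prefer steadier returns.
    """
    mean = statistics.fmean(period_returns)
    variance = statistics.pvariance(period_returns)
    return mean - risk_aversion * variance
```

Compare a steady strategy, `[0.01, 0.01, 0.01, 0.01]`, with a volatile one, `[0.2, -0.15, 0.2, -0.15]`. With `risk_aversion=0.0`, the volatile strategy scores higher (mean 0.025 versus 0.01). With the default `risk_aversion=2.0`, the variance penalty pushes the volatile strategy to about -0.036, so the steady strategy wins. The same learning algorithm thus behaves very differently depending on how the objective is defined.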

Use Case #4: Autonomous driving

RL is also an indispensable tool in autonomous driving, where cars (and other vehicles) can learn to make better decisions in traffic. Autonomous driving is a prime example of state-of-the-art applications of machine learning and AI, and RL is central to it. Getting cars to navigate through traffic involves a myriad of sequential decisions such as changing lanes, stopping at traffic lights, avoiding collisions or parking. These decisions rely on motion planning, trajectory optimisation and scenario-based driving policies, all of which can be learnt with RL. But the use of RL in the automotive sector goes beyond autonomous driving: RL is equally relevant for optimising driver assistance systems, and it also provides an essential tool for predictive maintenance and quality control in automotive manufacturing.

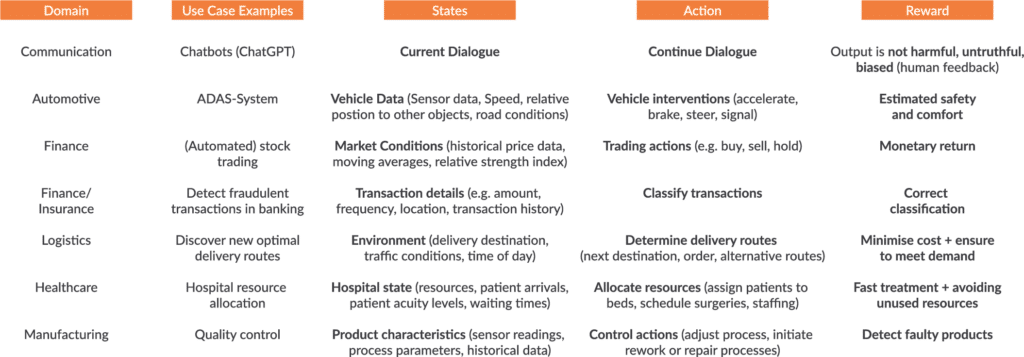

Figure 3 provides an overview of different domains and example use cases of RL in industry.

In our Deep Dive, we highlight the interactions between business methods, neuroscience and reinforcement learning in artificial and biological intelligence.

Technical and practical approaches and current developments

But what does it take to use reinforcement learning in an industrial use case? To solve a problem with reinforcement learning, it is generally important to quantify the key components of the problem in a simulated RL environment. First, one needs to specify the state space, i.e., all possible circumstances the agent can be in. Returning to our original example of a robot learning to navigate, the state space would comprise all possible positions of the robot. A 'state' can also be more abstract, such as a particular financial market configuration or inventory status. Second, it is important to specify the agent's action space, such as moving up, down, left or right. This action space determines what the agent can do to interact with a particular system. The basic goal is to find the best actions in that system to solve a particular problem. 'Good' and 'bad' actions are determined by a reward function that tells the agent which outcomes are good or bad. It is often assumed that agents receive a large positive reward for achieving a goal, a large negative reward for failure or risky behaviour (e.g., falling off a cliff or running out of energy), and a small negative reward for each step spent searching for the goal.
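The three components named above (state space, action space, reward function) can be made explicit in a small simulated environment. The sketch below uses a hypothetical 4x5 grid with a row of cliff cells. The grid size, the positions and the reward magnitudes are illustrative assumptions that follow the convention described in the text: a large positive reward for the goal, a large negative reward for failure and a small negative reward per step.

```python
from typing import NamedTuple

# Hypothetical 4x5 grid world: the robot moves between cells; some are a cliff.
ROWS, COLS = 4, 5
START = (3, 0)                      # robot's starting cell
GOAL = (3, 4)
CLIFF = {(3, 1), (3, 2), (3, 3)}

# State space: every cell the robot could occupy.
STATES = [(r, c) for r in range(ROWS) for c in range(COLS)]

# Action space: what the agent can do in any state.
ACTIONS = {"up": (-1, 0), "down": (1, 0), "left": (0, -1), "right": (0, 1)}

# Reward function: which outcomes are good or bad.
GOAL_REWARD = 100.0    # large positive reward for reaching the goal
CLIFF_REWARD = -100.0  # large negative reward for failure (falling off)
STEP_REWARD = -1.0     # small cost for every step spent searching


class Transition(NamedTuple):
    next_state: tuple
    reward: float
    done: bool  # True when the episode ends (goal reached or cliff hit)


def step(state, action):
    """Environment dynamics: apply an action and return the outcome."""
    r, c = state
    dr, dc = ACTIONS[action]
    # Moves off the grid are clipped, so the robot stays on the board.
    nxt = (min(max(r + dr, 0), ROWS - 1), min(max(c + dc, 0), COLS - 1))
    if nxt == GOAL:
        return Transition(nxt, GOAL_REWARD, True)
    if nxt in CLIFF:
        return Transition(nxt, CLIFF_REWARD, True)
    return Transition(nxt, STEP_REWARD, False)
```

Keeping these three components explicit and separate makes it easy to change one of them, such as making the cliff penalty harsher, without touching the learning algorithm that is later trained against `step`.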

A key aspect of applying RL to real-world industry problems is the use of so-called digital twins. Digital twins are artificial environments that emulate a physical system in order to simulate and analyse its behaviour. We can use digital twins to train RL agents in a safe and controlled environment: to learn that falling off a cliff has severe consequences, it is preferable to have this experience virtually rather than in real life. Working with digital twins allows us to simulate different and potentially rare scenarios for our RL agents and lets them find appropriate solutions without the risk of actual damage to the system. Besides preventing negative consequences of risky behaviour during learning, digital twins can also make training more efficient.

There are also important challenges to address when applying RL to industry problems. As discussed above, it is critical to get the reward function (also called the objective function) of an RL agent right. If we design an agent that exclusively cares about reward maximisation, such as reaching a specific goal or maximising a profit margin, the agent might display risky behaviour, for example 'optimism in the face of uncertainty'. As a developer, it is therefore essential to define an objective function that not only considers the positive gains of a solution but also accounts for negative outcomes, e.g., by specifying a high negative reward for unwanted and risky behaviour. This is where digital twins become particularly important, as they allow such behaviour to be explored without real-world damage.
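A tiny numerical example shows how the choice of objective changes what counts as the "best" action. The two actions, their probabilities and the profit values below are hypothetical. A profit-only objective (no failure penalty) prefers the risky action because its expected profit is higher. Once failures carry a large negative reward, the safe action becomes optimal.

```python
# Two hypothetical actions; each outcome is (probability, profit, failed).
ACTIONS = {
    "safe": [(1.0, 2.0, False)],
    "risky": [(0.9, 3.0, False), (0.1, 0.0, True)],
}


def expected_reward(outcomes, failure_penalty):
    """Expected reward when every failure additionally costs `failure_penalty`."""
    return sum(p * (profit - (failure_penalty if failed else 0.0))
               for p, profit, failed in outcomes)


def best_action(failure_penalty):
    """Action an expected-reward maximiser would pick under this objective."""
    return max(ACTIONS, key=lambda a: expected_reward(ACTIONS[a], failure_penalty))
```

`best_action(0.0)` returns `"risky"` (expected reward 2.7 versus 2.0), while `best_action(20.0)` returns `"safe"`, because the 10% chance of failure now costs 2.0 in expectation and drops the risky action to 0.7.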

Current research also addresses questions about the explainability and interpretability of RL systems. As with any other machine learning algorithm, it is not only important to solve a specific task but also to understand how it is solved. In the case of RL, we not only want to find an agent that makes choices that solve a specific problem, but also to understand why it has made these specific choices. This question highlights the important link between research on RL and explainable AI, which is an area of much recent interest.

Learn how large language models such as ChatGPT are improved through Reinforcement Learning from Human Feedback (RLHF) in our article "Reinforcement Learning from Human Feedback in the Field of Large Language Models".

Conclusion

In conclusion, reinforcement learning offers exciting potential for solving sequential optimisation problems in industry. Having emerged from behavioural science over 120 years ago, RL has since become a dominant framework in disciplines as diverse as computer science, robotics, neuroscience and business analytics. As outlined above, casting an industry use case in an RL framework requires careful thought about how the problem can be specified in quantitative terms and how best to train the RL agent. Once this groundwork is in place, RL provides a powerful tool for solving many different problems in industry and is likely to become an increasingly dominant method of choice in business domains as diverse as manufacturing, automation, finance, transport, logistics, predictive maintenance and healthcare.
